By Ivano Magnifico, Product Manager AUTOMA

From the presentation “Back to the future: when the past is already the future”

SMART GRID DAYS 2025, 8 – 9 October 2025.

Are we using the data we receive from the monitoring systems of cathodic protection as we should? To understand this, let’s summarise the history, the current situation and the future of pipeline monitoring, particularly focusing on what we take for granted and what seems normal because we see it every day.

In this article and the next, we will talk about the monitoring methods and how it is possible to optimise data transmission.

With this content, we are mainly addressing readers outside Italy, whose management practices differ from ours. The recap can nevertheless be useful for us Italians too, to check whether we are working to the best of our abilities.

Definition of Remote Monitoring

UNI EN ISO 15589-1:2017 proposes this definition of remote monitoring: “At a minimum, remote monitoring must provide the same level of information obtained by cathodic protection operators in the field”.

What does this mean? The “minimum” is a spot measurement taken at the same frequency with which a technician can go out into the field to carry out checks. Relying solely on this standard means taking things a bit too literally: imagine what it means to take one spot measurement every six months, considering everything that can happen in the meantime.

There is no definition of remote monitoring in the NACE standards. However, there is a working group that has the task of drafting the MR21551 standard on remote monitoring. When this standard is drafted, you will find that there is some reference to what we do in Italy.

RMU vs Datalogger

When we limit ourselves to what the standard requires, we are faced with a contrast between a remote monitoring unit (RMU), which takes measurements from time to time, and a data logger, which analyses the effects of interference with high-frequency measurements. Normally, one faces a dilemma: which one to choose?

If we choose a remote monitoring unit, we limit ourselves to periodic measurements with low transmission requirements, but we forego high-frequency sampling. If we choose a data logger, we will have high sampling frequencies and an assessment of transient effects, but data retrieval will be difficult and usually done manually, as the device does not have remote access.

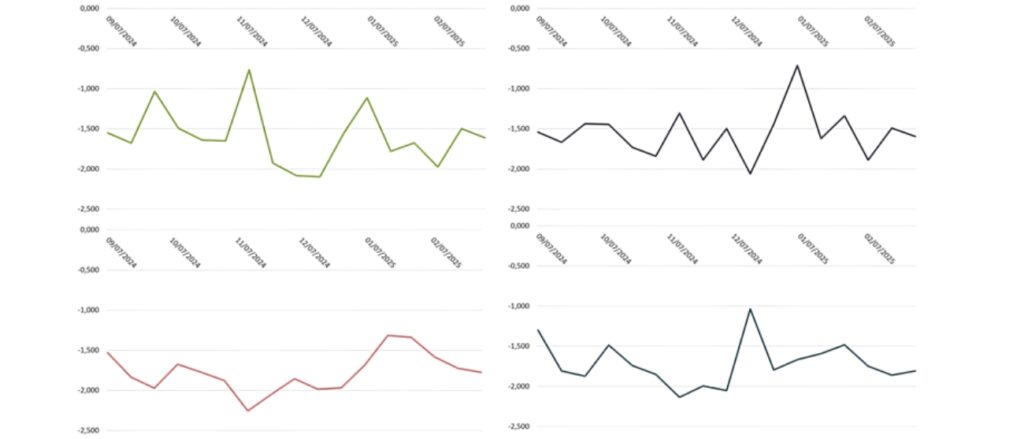

ON potential trend on structure

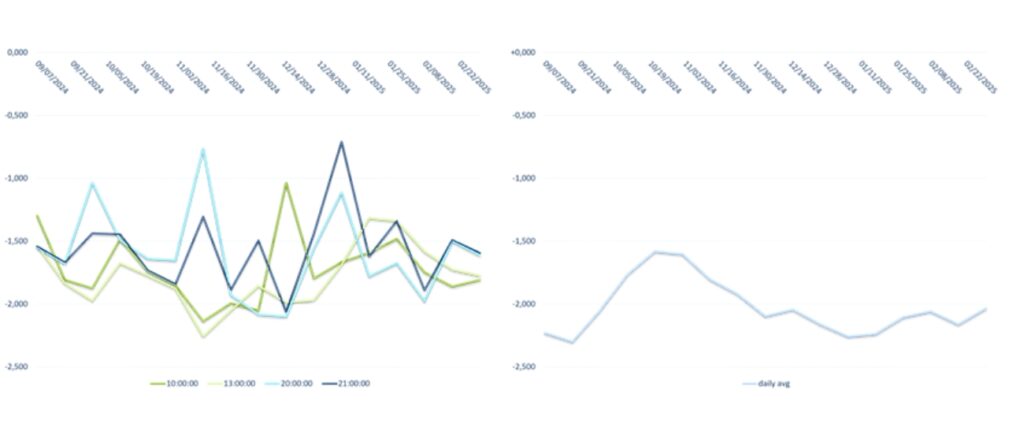

This graph shows four potential trends at four measurement points over six months (one measurement per week).

These measurements appear to belong to different cathodic protection systems, but in reality all four curves come from the very same measurement point, read at different times of day: 10:00, 13:00, 20:00 and 21:00 (the graph on the left). This is what I get when I take a spot measurement at a fixed interval. I lose track of everything that happens in between: I cannot get clear information on the actual trend, which is what can be seen in the graph on the right.
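The effect is easy to reproduce. The sketch below is purely illustrative: the `potential` function and every number in it are invented (a steady -1.10 V level plus an arbitrary daily disturbance) and are not the data behind the graphs. It reads that one signal once a week at each of the four hours mentioned above.

```python
import math

def potential(second_of_day: int) -> float:
    """Hypothetical ON potential in volts at one measurement point.

    Assumption: a steady -1.10 V level plus an invented disturbance that
    repeats every day. Real interference is far less regular.
    """
    hour = second_of_day / 3600
    envelope = math.sin(math.pi * hour / 24)   # zero at midnight, largest at noon
    swing = math.sin(2 * math.pi * hour / 7)   # arbitrary 7-hour oscillation
    return -1.10 + 0.30 * envelope * swing

def weekly_spot_readings(hour: int, weeks: int = 26) -> list[float]:
    """One spot reading per week, always taken at the same hour of the day."""
    return [round(potential(hour * 3600), 3) for _ in range(weeks)]

def daily_mean() -> float:
    """Mean of one reading per second over the whole day."""
    return sum(potential(s) for s in range(86_400)) / 86_400

if __name__ == "__main__":
    for hour in (10, 13, 20, 21):
        print(f"{hour:02d}:00 -> {weekly_spot_readings(hour)[0]:.3f} V")
    print(f"daily mean -> {daily_mean():.3f} V")
```

The four hours return four different levels from one and the same signal, which is why the weekly curves look as if they belonged to different systems.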

Remote Data Logger Unit and Edge Computing

To overcome this problem, we need a tool that combines the features of a Remote Monitoring Unit (RMU) and a data logger: a Remote Data Logger Unit. This is a device that not only allows us to combine remote communication with high-frequency monitoring, but is also intelligent, highlighting only the key aspects of the information (indeed, there are constraints in terms of the amount of data that can be sent). The goal is to optimise transmission.

This goal can be achieved through edge computing: a computing model that processes information locally and sends only the essential data to the Cloud (the daily report). Like a data logger, the device can therefore take one measurement per second at the site where it is installed. At this rate it collects 86,400 measurements by the end of the day: far too many to send in full, especially since the device runs on battery.

Therefore, the device processes this information and provides a summary, indicating:

- Daily minimum, average, maximum: where the average value is a consistent value derived from one measurement per second over the course of the day, making it possible to understand the actual trend (not as in the previous graph on the left).

- Statistical information: mode, i.e. the most frequent value measured within the 86,400 samples; standard deviation; and variability, to get an idea of how much the measurement varies throughout the day.

- Total time (seconds) below the minimum threshold and above the maximum threshold during the day: to have a range in which to consider the signal valid or invalid; in the latter case, there will be a series of alarms or conditions to pay attention to.

- Total number of exceedances of the minimum threshold during the day.

- Total number of exceedances of the maximum threshold during the day.
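As a rough illustration of how such a summary could be computed on the device, here is a minimal Python sketch. It is not AUTOMA's firmware: the `DailyReport` fields, the function names and the 10 mV grouping used to find the most frequent value are all assumptions made for the example. The "variability" figure is left out because the article does not define it.

```python
from collections import Counter
from dataclasses import dataclass
from statistics import fmean, pstdev

@dataclass
class DailyReport:
    minimum: float
    average: float
    maximum: float
    mode: float
    std_dev: float
    seconds_below_min: int
    seconds_above_max: int
    excursions_below_min: int
    excursions_above_max: int

def count_excursions(flags: list[bool]) -> int:
    """Count runs of consecutive True flags; each run is one exceedance."""
    runs = 0
    previous = False
    for flag in flags:
        if flag and not previous:
            runs += 1
        previous = flag
    return runs

def summarise(samples: list[float], min_threshold: float, max_threshold: float) -> DailyReport:
    """Reduce one-per-second samples (volts) to a daily summary."""
    below = [s < min_threshold for s in samples]
    above = [s > max_threshold for s in samples]
    # Assumption: values are grouped in 10 mV steps before picking the most frequent one.
    bins = Counter(round(s * 100) for s in samples)
    mode = bins.most_common(1)[0][0] / 100
    return DailyReport(
        minimum=min(samples),
        average=fmean(samples),
        maximum=max(samples),
        mode=mode,
        std_dev=pstdev(samples),
        seconds_below_min=sum(below),  # one sample per second, so samples count as seconds
        seconds_above_max=sum(above),
        excursions_below_min=count_excursions(below),
        excursions_above_max=count_excursions(above),
    )

if __name__ == "__main__":
    # Tiny invented series, just to show the call; a real day has 86,400 samples.
    demo = [-1.10] * 10 + [-0.70] * 3 + [-1.10] * 5 + [-1.60] * 2
    print(summarise(demo, min_threshold=-1.50, max_threshold=-0.85))
```

Whatever the number of samples, the result is always the same handful of numbers, which is what keeps the transmission small.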

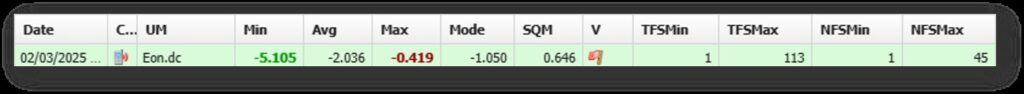

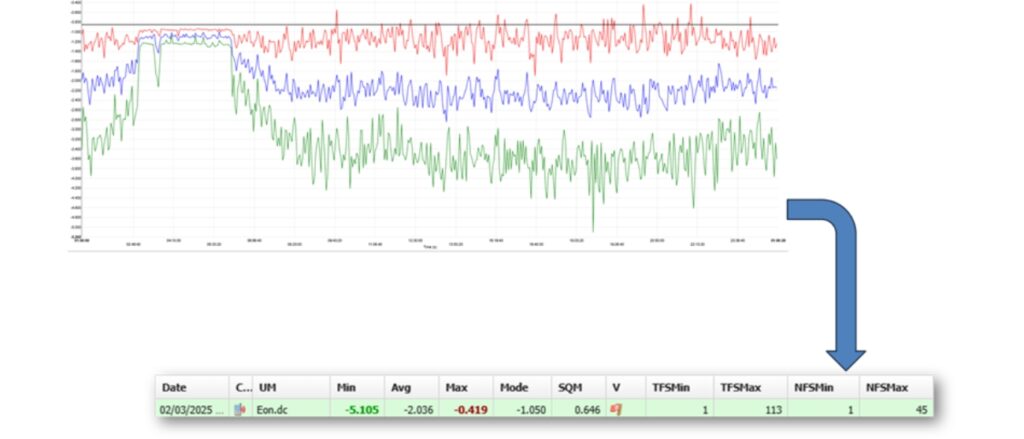

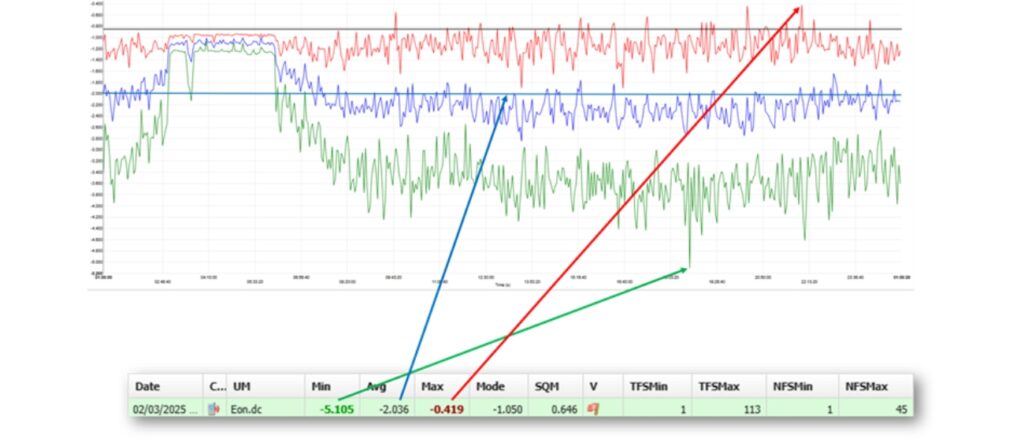

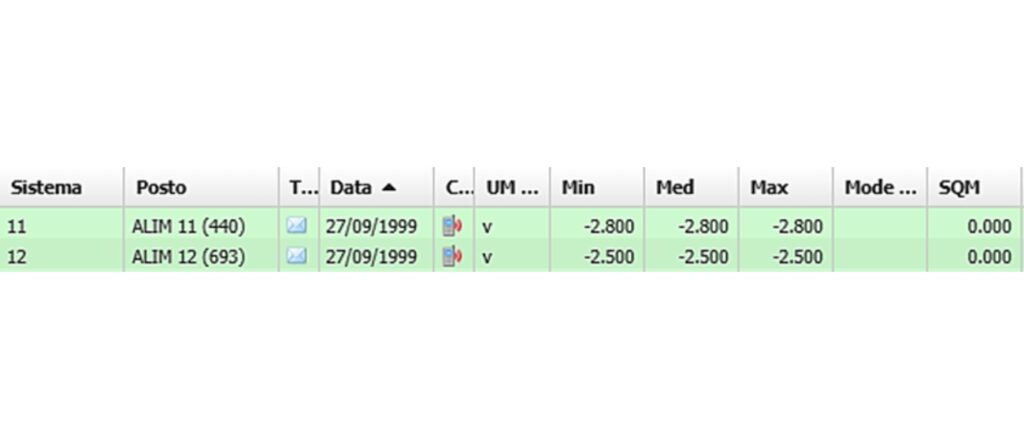

All this information, which is summarised in sets of numbers (see figure below), fits in a few kilobytes of data per day but tells the story of everything that happened over the 24 hours, and it will do so for as long as the device is installed.

Read the daily report

Edge Computing

The figure shows some of these values in detail.

Min, avg, max

How can we transform the recording of 24 hours of data into a daily report?

First of all, we have the following information:

- Minimum value: the most negative value measured over 24 hours;

- Average value: given by the arithmetic mean of the samples taken over 24 hours;

- Maximum value: the most positive value measured over 24 hours.

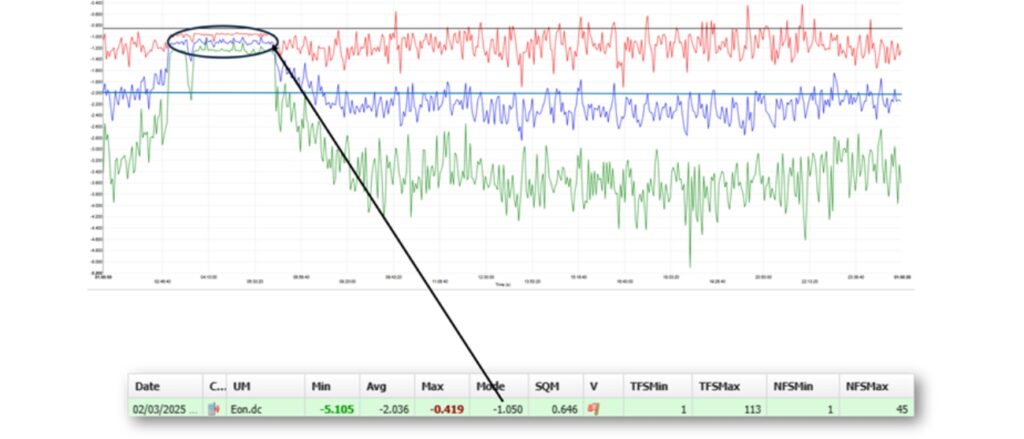

Mode

Arithmetically, the mode is the most frequent value within a set of samples (here, the 86,400 samples of one day). Usually, mean and mode have similar values, but when we are faced with a non-stationary interference, such as at a railway crossing (see fig. below), the mode takes on a very particular meaning: during the night hours we find a more stable measurement range and, almost always, the mode coincides exactly with the value measured at night, when the system is not interfered with. Indeed, a value is more likely to recur many times within that stable range.

So, even in a condition of considerable variability, these few numbers also make it possible to extract information about what the potential at that measurement point is in the absence of interference.
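A small Python sketch can make this concrete. The day below is entirely synthetic: the five quiet night hours, the -1.10 V undisturbed level and the shape of the interference are assumptions chosen for illustration, and the most frequent value (the statistical mode) is taken after grouping the samples in 10 mV steps.

```python
from collections import Counter
import math
from statistics import fmean

NIGHT_SECONDS = 5 * 3600  # assumption: no trains from 00:00 to 05:00

def synthetic_day() -> list[float]:
    """Invented one-per-second potentials (volts) near a railway crossing."""
    samples = []
    for t in range(86_400):
        if t < NIGHT_SECONDS:
            samples.append(-1.10)  # undisturbed level (assumed)
        else:
            # Assumed interference: a shifted level with a 10-minute swing
            samples.append(-0.95 + 0.40 * math.sin(2 * math.pi * t / 600))
    return samples

def mode_10mv(samples: list[float]) -> float:
    """Most frequent value after grouping the samples in 10 mV steps."""
    bins = Counter(round(s * 100) for s in samples)
    return bins.most_common(1)[0][0] / 100

if __name__ == "__main__":
    day = synthetic_day()
    print(f"mean: {fmean(day):.3f} V")
    print(f"mode: {mode_10mv(day):.2f} V")
```

With these invented numbers the mean is pulled to about -0.98 V by the daytime interference, while the most frequent value stays at the -1.10 V night-time level.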

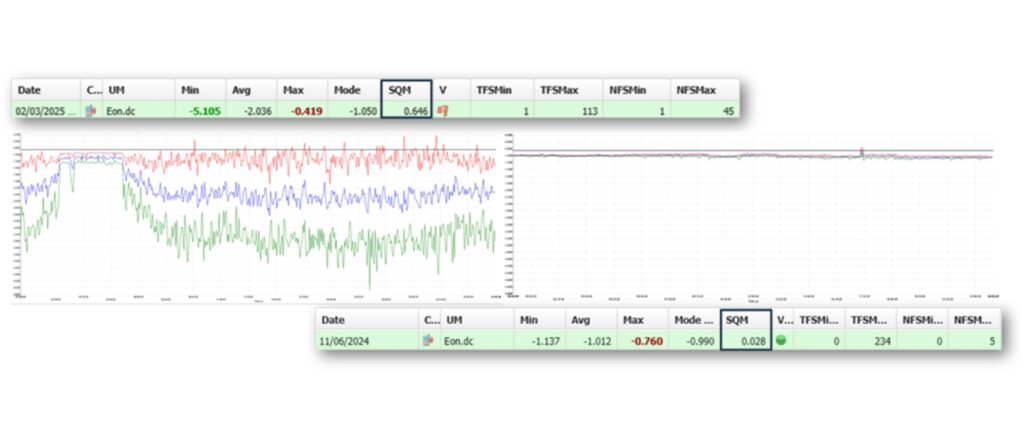

Standard deviation and variability

Looking at the type of graph in the figure below on the left, we would expect the standard deviation (also called root-mean-square deviation) to be quite high. However, I could also have measurements with similar minimum and maximum values that are due to a single interference lasting only a few seconds.

This can be seen from the standard deviation value; indeed, this value indicates how stable my sample population was over the course of 24 hours. Therefore, even if I have rather wide minimum and maximum values as a range, if I realise that I have a low standard deviation (below 0.05), I know that in reality, throughout most of the day, my value has been close to the average value.
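The difference shows up clearly in a quick Python comparison. Both days below are invented for the purpose: they share the same minimum and maximum, but in day A the extremes are reached only in two 5-second spikes, while in day B the potential swings between them all day.

```python
from statistics import pstdev

DAY = 86_400  # one sample per second

# Day A (invented): steady at -1.10 V, with two isolated 5-second spikes.
day_a = [-1.10] * DAY
day_a[30_000:30_005] = [-0.60] * 5
day_a[60_000:60_005] = [-1.60] * 5

# Day B (invented): swinging between the same two extremes all day long.
day_b = [-0.60 if (t // 60) % 2 == 0 else -1.60 for t in range(DAY)]

if __name__ == "__main__":
    for name, day in (("A", day_a), ("B", day_b)):
        print(f"day {name}: min {min(day):.2f}  max {max(day):.2f}  "
              f"std dev {pstdev(day):.3f}")
```

Day A yields a standard deviation of roughly 0.005, well under the 0.05 mark, while day B yields 0.5 despite having an identical min-max range.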

Time and number of alarms

The daily report also allows us to know how long we have been outside the limit conditions we have set.

The time and the number of excursions below the minimum threshold show for how long and how many times the potential went below that value: in the case shown in the image below, the minimum threshold was crossed once, for 1 second. Likewise, the time and the number of excursions above the maximum threshold show for how long and how many times the potential went above that value: in the case below, a total of less than 2 minutes above the maximum threshold was accumulated over forty-five intervals. This also gives us an idea of the average time out of protection per interval; in this case, around 2.5 seconds.

Why is this crucial? Because by taking continuous measurements I can find out everything that is happening, and I only need to look at this value to check whether the structure is at risk of corrosion. Clearly, where cathodic protection is applied continuously, short intervals outside the protection levels do not entail an immediate risk of corrosion: it is up to the technician to decide and set the interval above which an alert is needed. In any case, in Italy, the regulation has established a maximum value of 3,600 non-continuous seconds.
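The arithmetic behind these two checks is simple enough to sketch in Python. The function names are hypothetical, and the sketch assumes that the 3,600-second limit applies to the cumulative out-of-threshold time within one day; the regulation itself should be consulted for the exact rule.

```python
# The limit quoted in the text, read here as a cumulative daily total (assumption).
DAILY_LIMIT_SECONDS = 3_600

def mean_excursion_seconds(total_seconds: float, excursions: int) -> float:
    """Average length of one out-of-threshold interval."""
    return total_seconds / excursions if excursions else 0.0

def needs_attention(total_seconds: float, limit: float = DAILY_LIMIT_SECONDS) -> bool:
    """True when the cumulative out-of-threshold time exceeds the limit."""
    return total_seconds > limit

if __name__ == "__main__":
    # Upper bound from the example in the text: less than 2 minutes over 45 intervals.
    print(round(mean_excursion_seconds(120, 45), 2))  # 2.67
    print(needs_attention(120))                        # False
```

Taking the full 2 minutes as an upper bound gives at most about 2.7 seconds per interval, consistent with the figure of around 2.5 seconds, and the daily total stays far below the limit.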

According to ChatGPT, the term “Edge Computing” began to be known in 2014 but came into common use around 2017. This matters for a simple reason: everything we have seen so far has been done in Italy since 2001, as required by the UNI 10950 standard published that year.

In the chart below is the first daily report found in our database, which dates back to 1999, proving that we have been doing Edge Computing “without knowing it” for more than 25 years.

AUTOMA designs and produces innovative, Made in Italy hardware and software solutions for remote monitoring and control in the Oil, Gas and Water sectors.

We were born in 1987 in Italy, and today over 50,000 Automa devices are installed in more than 40 countries around the world.

Do you want to know the benefits for the security of your networks that you could have with the AUTOMA monitoring system for cathodic protection?

Contact our team without obligation and we will tell you what we can do to optimise your infrastructure control.

Product Manager at Automa S.r.l.


Electronics engineer, he is certified as a Senior Technician in cathodic protection and specialises in market analysis and industry standards. With more than 15 years of experience in remote cathodic protection monitoring and a patent on an intelligent reference electrode, Ivano is a member of the Board of Directors of Ceocor (European Committee for the Study of Corrosion and Protection of Piping Systems) and Delegate of AMPP Italy Chapter, as well as an active member of the ISO and AMPP standard working groups for cathodic protection.